AI and HPC: how they work together and why they need each other

High performance computing (HPC) is the use of aggregated processing power to perform complex or high volume computations. HPC systems are composed of nodes (machines) that are clustered together. These clusters process distributed workloads using parallel processing. In this article, you will learn how HPC and Artificial Intelligence (AI) can work together. We will also figure out why HPC and AI workloads are moving to the cloud.

AI and HPC: How HPC Helps You Build Better AI Applications

HPC systems typically have between 16 and 64 nodes, each with two or more central processing units (CPUs). This provides significantly greater processing power than traditional systems with only one or two CPUs in a single device. Additionally, each node contributes its own memory and storage, giving the cluster much greater capacity and speed than a traditional system.

Many HPC systems incorporate graphics processing units (GPUs) and field-programmable gate arrays (FPGAs) to boost processing power. These are purpose-built processors that can run deep learning methods, central to most AI models, an order of magnitude faster than traditional CPUs.

There are several ways HPC systems can contribute to the development of artificial intelligence, including:

- Specialized processors: GPUs enable you to more efficiently process AI-related algorithms, such as those used by neural network models.

- Speed: parallel processing and co-processing significantly speed up computation. This enables you to process data sets and run experiments in less time.

- Volume: large amounts of storage and memory enable you to run longer analyses and process significant volumes of data. This helps you improve the accuracy of artificial intelligence models.

- Efficiency: high performance computing combined with AI enables you to distribute workloads across the available processors and resources. This allows you to maximize resource use.

- Cost: HPC systems can provide more cost-effective access to the computing power that big-data AI workloads require. If you use cloud resources, you can access HPC with pay-as-you-go pricing and avoid upfront costs.
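The speed and efficiency points above rest on one pattern: split a workload into chunks, process the chunks independently, and combine the partial results. Below is a minimal sketch of that scatter/gather pattern in Python. The function names are hypothetical, and threads stand in for cluster nodes only for illustration. In CPython, threads do not give true CPU parallelism for this kind of work; a real HPC job would hand the chunks to separate processes or nodes, for example through MPI or a job scheduler.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(items, num_chunks):
    """Split a list into at most num_chunks contiguous, near-equal slices."""
    size, extra = divmod(len(items), num_chunks)
    chunks, start = [], 0
    for i in range(num_chunks):
        # The first `extra` chunks take one additional item each.
        end = start + size + (1 if i < extra else 0)
        if end > start:
            chunks.append(items[start:end])
        start = end
    return chunks


def partial_sum_of_squares(chunk):
    """Work done independently by one worker (a stand-in for one node)."""
    return sum(x * x for x in chunk)


def scatter_gather_sum_of_squares(items, num_workers=4):
    """Scatter chunks to workers, then gather and combine the partial results."""
    chunks = split_into_chunks(list(items), num_workers)
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        partials = list(pool.map(partial_sum_of_squares, chunks))
    return sum(partials)


if __name__ == "__main__":
    print(scatter_gather_sum_of_squares(range(1_000), num_workers=4))
```

The combined result matches a plain single-threaded sum. What changes on a cluster is where each chunk runs, not the shape of the computation.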

The Convergence of HPC and AI

AI development goes hand in hand with HPC advances. HPC can better support AI model training than traditional systems can. Meanwhile, you can use AI to more intelligently queue and process workloads, maximizing the resources of HPC systems.

Beyond supporting each other's workloads, HPC and AI share a need for high-performance infrastructure. The requirements include large volumes of storage and computing power, high-speed interconnects, and accelerators. Users of both technologies understand this overlap, and many are trying to leverage these similarities and mutual benefits to develop more powerful tools.

One caveat is that these technologies are managed in very different ways. Where HPC workloads are often managed with Slurm or PBS Pro, AI applications are frequently hosted in containers and managed with Kubernetes. Overcoming this difference and integrating the tooling is likely to be the next big step for these technologies.

AI and HPC Together

There are many ways in which AI and HPC are combined. A few of the most promising ones are covered below.

Higher-Level Programming

Until now, most programs run on HPC systems were written in Fortran, C, or C++. Likewise, the accelerators that HPC uses are typically supported with C interfaces, libraries, and extensions. While it is possible to use other languages, they don't have the same level of support or existing code for users to build on.

However, AI is often based on other tools and languages. To integrate the two, users are beginning to develop HPC applications and interfaces that support AI tools, such as Python and MATLAB. This is a relatively easy process since these programs will still be based on C or C++ routines.
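To illustrate what "based on C routines" can mean in practice, here is a minimal sketch of Python calling a compiled C function directly through the standard `ctypes` module. It assumes a Unix-like system where the C math library can be located. The wrapper name `c_cos` is hypothetical. Production HPC bindings are usually built with heavier tooling, but the layering is the same idea: a thin high-level interface over a compiled routine.

```python
import ctypes
import ctypes.util
import math

# Locate the platform's C math library. The lookup is platform-dependent;
# this sketch assumes a Unix-like system (Linux or macOS).
libm_path = ctypes.util.find_library("m")
# Fall back to the symbols already loaded into the current process.
libm = ctypes.CDLL(libm_path) if libm_path else ctypes.CDLL(None)

# Declare the C signature: double cos(double);
libm.cos.argtypes = [ctypes.c_double]
libm.cos.restype = ctypes.c_double


def c_cos(x: float) -> float:
    """Thin Python wrapper around the compiled C routine cos()."""
    return libm.cos(x)


if __name__ == "__main__":
    # The C routine and Python's own math.cos should agree closely.
    print(c_cos(1.0), math.cos(1.0))
```

Declaring `argtypes` and `restype` matters here: without them, `ctypes` would assume integer arguments and an integer return value, and the call would return garbage.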

Containers

Containers and containerized workloads have taken the spotlight in recent years. Containers enable more agile deployments, provide higher availability, and offer better resource optimization than traditional applications and services.

Many AI applications are already hosted in and operated from containers. Partially due to this, people are looking at ways to host HPC processes in containers as well. HPC has already started to move to the cloud, offered as a service by vendors like Azure, AWS, and GCP. Containerization is a logical next step.

Big Data

Big data is everywhere, supported by seemingly endless devices, websites, and profiles. However, all of this data is only useful when there are tools equipped to process it. Both AI and HPC can help with this.

HPC systems can help you ingest, process, and transform data in real-time. This prepares data for analysis, which frequently incorporates AI. When the systems combine, data can be analyzed and modeled significantly faster. Additionally, since there is no need to transfer data between systems, it’s easier to secure, and you’re able to save significant amounts of bandwidth.

Why HPC and AI Workloads Are Moving to the Cloud

Many organizations are in the advanced stages of migrating workloads to the cloud, and HPC applications are no different. For large-scale AI projects, cloud-based HPC can be very compelling for organizations of all sizes. Here are a few reasons HPC is moving to the cloud:

Accessible Technology

In the early days of supercomputing, HPC facilities were set up and run on-premises. The cost of on-premises HPC was, and still is, high enough that mainly large enterprises can afford it. Cloud-based HPC lowers this barrier, giving businesses of all sizes access to high performance computing for AI.

Enjoy the Best of Both Worlds

Since AI models require more and more computing power, HPC is often a major enabler of growth. By moving both AI and HPC to the cloud, businesses and research facilities can leverage the power of the two technologies together.

Multi-Cloud and Hybrid Clouds

Another major advantage is the ability to implement multi-cloud and hybrid cloud infrastructures. Instead of running strictly on-premises or strictly in the cloud, organizations can ensure high availability and scalability for their projects while maintaining control over architectures and costs and avoiding vendor lock-in.

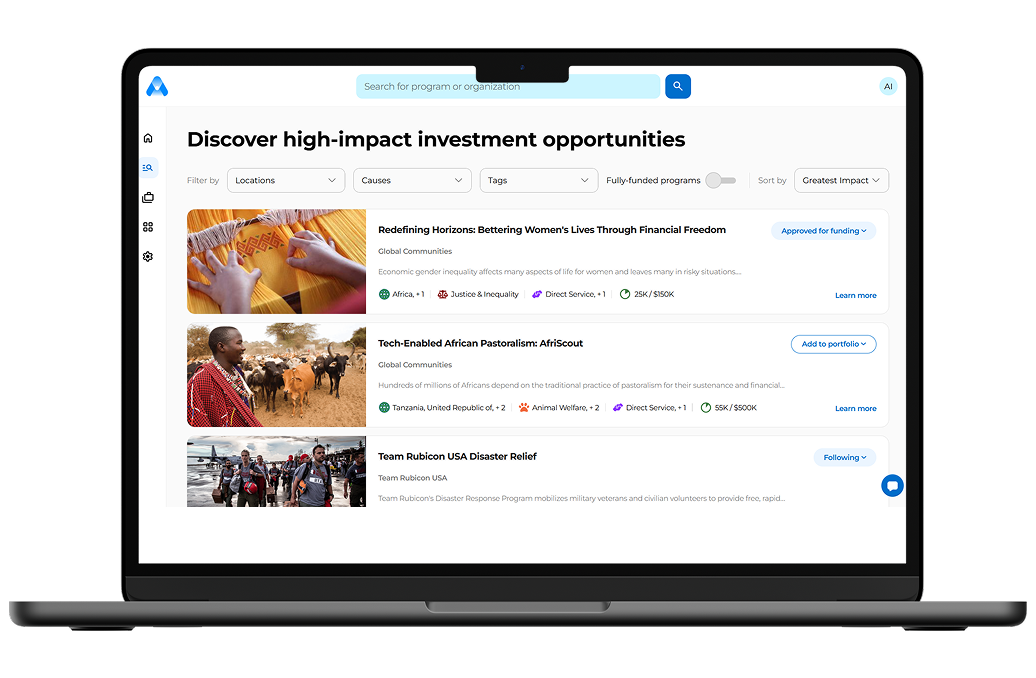

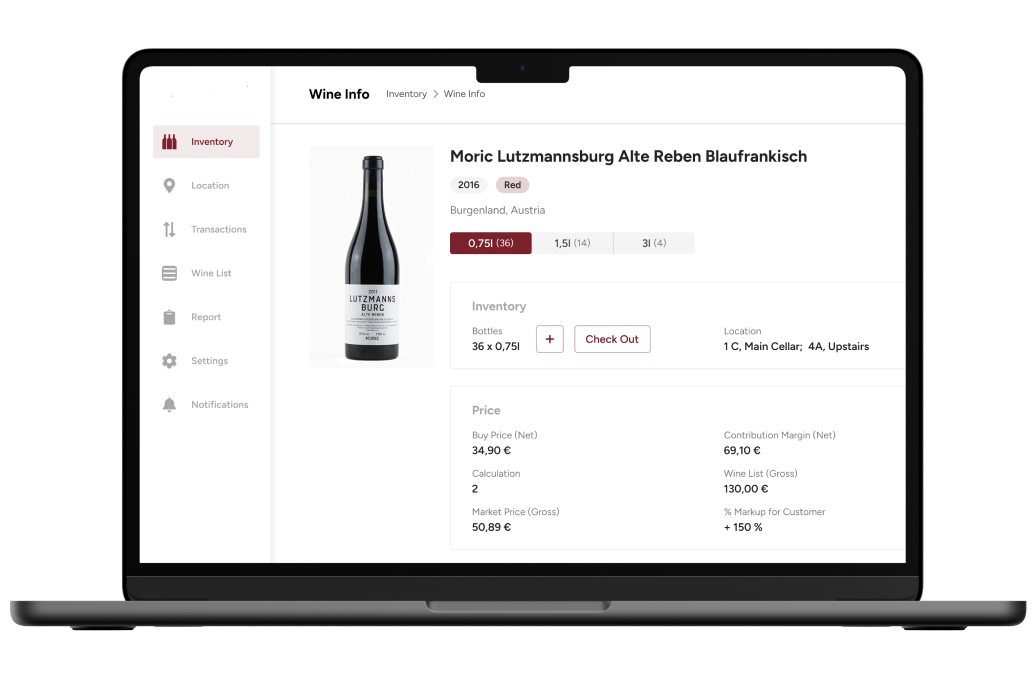

Dedicated Platforms and Tools

Cloud vendors are creating services that are tailored to HPC and AI. These services are designed to provide ease of use through user-friendly interfaces and cloud-based features that meet the needs of several use cases.

Expert Support

Cloud computing services often offer support, helping organizations gain a better understanding of how to run and manage their applications in the cloud. Organizations can leverage this support to choose the right cloud resources for their projects, optimize the environment and capacity, and ensure applications are running in an optimal manner.

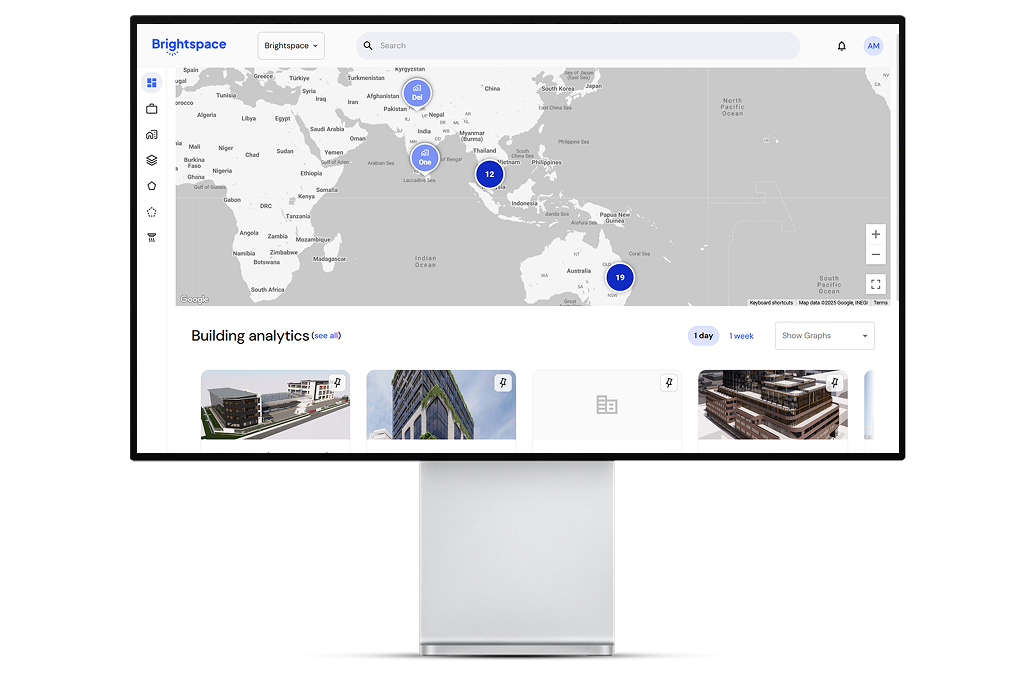

High End Capabilities

Cloud-based HPC often comes with built-in features or service integrations that help organizations take advantage of high-end technologies such as high performance data analysis. Notable fields that can benefit from high performance data analysis include smart cities, healthcare and medicine, business intelligence, and cybersecurity.

How HPC is Accelerating AI in the Automotive, Biomedical, and Healthcare Industries

There are many real-world applications for HPC and AI, from meteorological predictions to fraud detection. Below are three examples that can give you a better idea of the benefits this pairing can provide.

Automotive

Most people are at least familiar with the idea of autonomous vehicles. These are vehicles that can self-correct lane placements, park without assistance, or automatically call authorities in case of an accident. Autonomous vehicles expand upon the existing AI capabilities that already help drivers navigate and stay safe.

To accomplish this, these vehicles must collect, process, and analyze significant amounts of sensor data every second. However, before that, the algorithms that these vehicles use to predict situations and determine actions must be trained.

This training can be done on HPC systems. After batch size is defined and models are trained, in-vehicle HPC systems can be used to run these models and process data in real-time. Without HPC, AI models cannot be run fast enough to be effective or functional.

Biomedical

As technology advances, biomedical research is growing more and more digital. There are now vast streams of biological data that researchers can access and analyze. This data is generated by a variety of technologies, from genome sequencing to cryo-electron microscopes. To process this data, many researchers are running HPC-supported, AI-based analytics.

One common example of this is drug research. This research uses HPC to construct highly detailed biological models that can simulate biological processes down to the subcellular level. Researchers then apply AI to determine how possible drug structures and combinations might affect human functioning.

Healthcare

Modern hospitals constantly generate data, from patient records to clinical trials to medical decision making. Effectively analyzing this data and applying it to improve patient care, clinician effectiveness, and hospital operations requires advanced tools, such as HPC systems and AI models.

One of the ways in which HPC and AI are being used is to create more reliable diagnostic tools. For example, image recognition models are being trained to accurately identify whether masses are benign or cancerous tumors. To accomplish this, researchers train models against large amounts of data generated by HPC systems. This data is used to refine the diagnostic model in the hope that it can someday be applied to pre-screening patients or catching tumors earlier in development.

Conclusion

HPC and AI development services are a perfect match. When these two technologies are used to support each other, AI gains the speed it needs to become smarter, and HPC gains the smart capabilities needed to provide better results. Big data gives a deep learning model the data it needs to learn from, and HPC helps the AI algorithm work through that data faster. These added capabilities can help improve AI applications in the healthcare, biomedical, and automotive fields.

Thank you to Gilad David Maayan for contributing this article. Gilad David Maayan is a technology writer who has worked with over 150 technology companies including SAP, Samsung NEXT, NetApp and Imperva, producing technical and thought leadership content that elucidates technical solutions for developers and IT leadership.